The initial version of RCCLX, an enhanced variant of RCCL, has been open-sourced. This version was developed and rigorously tested on internal workloads. RCCLX seamlessly integrates with Torchcomms, aiming to provide researchers and developers with tools to accelerate innovation across various backend choices.

AI model communication patterns and hardware capabilities continue to evolve, and RCCLX is designed to enable rapid iteration on collectives, transports, and new features on AMD platforms. CTran, a custom transport library for NVIDIA platforms, was previously developed and open-sourced. With RCCLX, CTran has been brought to AMD platforms, introducing AllToAllvDynamic, a GPU-resident collective. Not all CTran features are part of the open-source RCCLX library yet; they are expected to become available in the coming months.

This post focuses on two new features: Direct Data Access (DDA) and Low Precision Collectives. These innovations deliver substantial performance enhancements on AMD platforms.

Direct Data Access (DDA) – Lightweight Intra-node Collectives

Large language model inference involves two distinct computational stages, each with unique performance characteristics:

- The prefill stage processes the input prompt, which can contain thousands of tokens, to create a key-value (KV) cache for each transformer layer. This stage is compute-bound, as the attention mechanism’s quadratic scaling with sequence length demands significant GPU computational resources.

- The decoding stage then reads and incrementally updates the KV cache to generate tokens one at a time. Unlike prefill, decoding is memory-bound: memory I/O time often dominates attention time, and model weights and the KV cache consume most of the GPU memory.

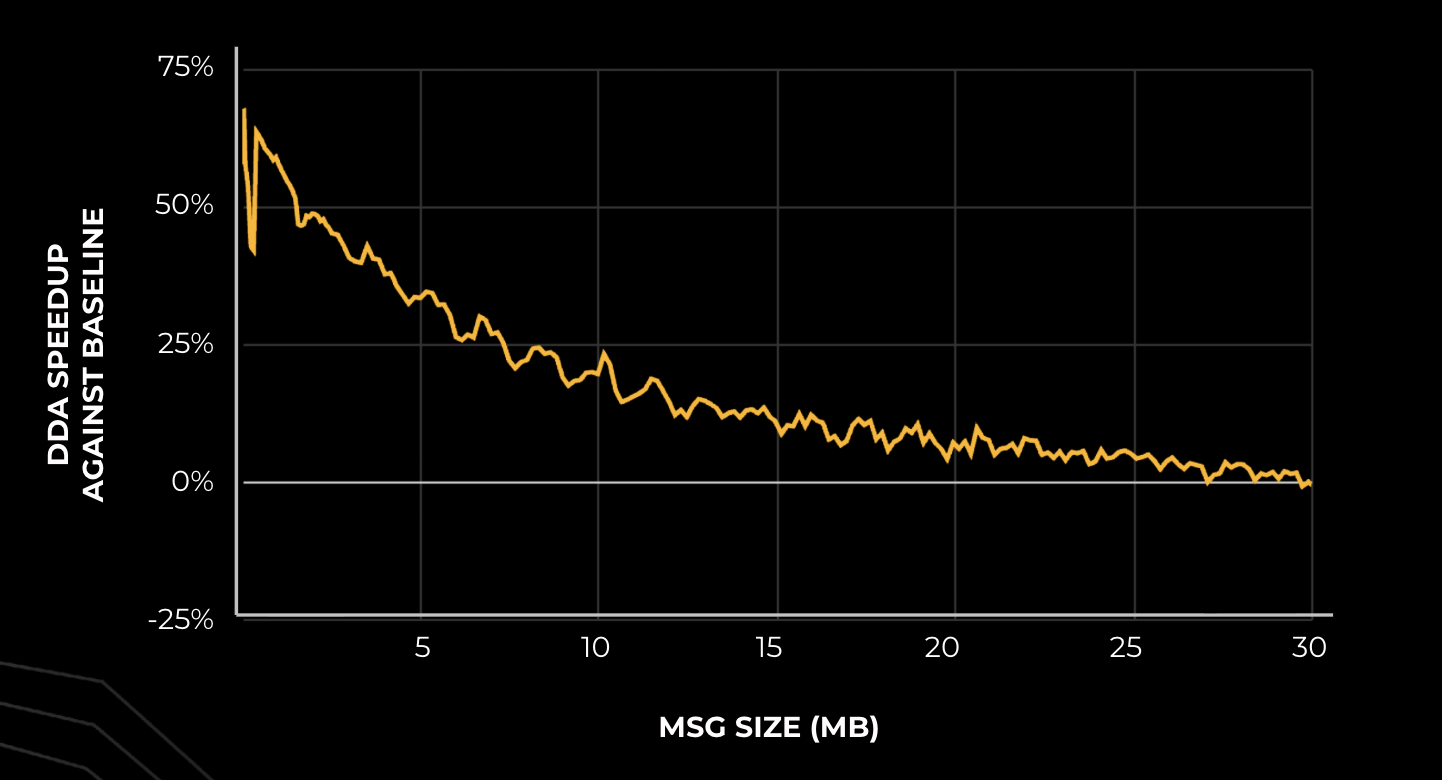

Tensor parallelism allows models to be distributed across multiple GPUs by sharding individual layers into smaller, independent blocks that execute on different devices. A significant challenge, however, is that the AllReduce communication operation can account for up to 30% of end-to-end (E2E) latency. To mitigate this bottleneck, two DDA algorithms were developed:

- The DDA flat algorithm improves allreduce latency for small messages. Each rank directly loads data from every other rank's memory and performs the reduction locally. This cuts latency from O(N) to O(1) in the number of ranks N, at the cost of increasing the total data exchanged from O(N) to O(N²).

- The DDA tree algorithm splits the allreduce into two phases, reduce-scatter and all-gather, and uses direct data access in each phase. It transfers the same amount of data as the ring algorithm but keeps latency constant with respect to the number of ranks, which makes it a better fit for slightly larger message sizes.

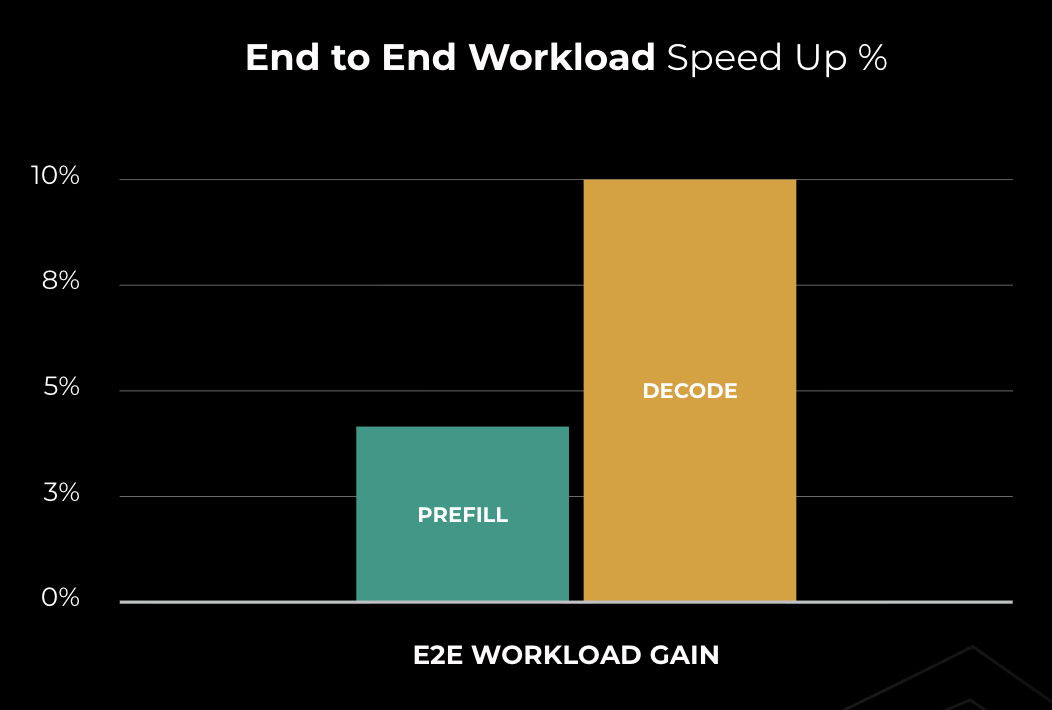
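The two DDA algorithms can be illustrated with a single-process NumPy simulation. Each rank's buffer is a list entry, and a "direct load" is simply a read of another entry. This is a sketch under that assumption, not RCCLX's kernel code. The function names are hypothetical, and real DDA reads peer GPU memory within the node.

```python
import numpy as np


def dda_flat_allreduce(buffers):
    """Flat DDA sketch: a single step in which every rank loads every peer's buffer.

    buffers[r] stands in for rank r's input, assumed directly readable by peers.
    Returns (outputs, remote_elems); remote_elems counts elements loaded from
    other ranks' memory.
    """
    n = len(buffers)
    outputs, remote_elems = [], 0
    for r in range(n):
        acc = np.zeros(buffers[r].shape, dtype=np.float32)
        for peer in range(n):
            acc += buffers[peer]  # direct load + local reduce, no relay through other ranks
            if peer != r:
                remote_elems += buffers[peer].size
        outputs.append(acc)
    return outputs, remote_elems


def dda_tree_allreduce(buffers):
    """Tree DDA sketch: reduce-scatter, then all-gather, each done with direct loads."""
    n = len(buffers)
    chunks = [np.array_split(np.asarray(b, dtype=np.float32), n) for b in buffers]
    remote_elems = 0
    # Phase 1 (reduce-scatter): rank r loads chunk r from every peer and reduces it.
    reduced = []
    for r in range(n):
        acc = np.zeros(chunks[r][r].shape, dtype=np.float32)
        for peer in range(n):
            acc += chunks[peer][r]
            if peer != r:
                remote_elems += chunks[peer][r].size
        reduced.append(acc)
    # Phase 2 (all-gather): every rank loads each peer's reduced chunk.
    outputs = []
    for r in range(n):
        outputs.append(np.concatenate(reduced))
        remote_elems += sum(reduced[p].size for p in range(n) if p != r)
    return outputs, remote_elems
```

Consider N ranks with S elements each. The flat version loads N(N-1)S elements in one step. The tree version loads 2(N-1)S elements, the same total traffic as a ring allreduce, but in two steps instead of the ring's 2(N-1).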

DDA provides substantial performance improvements over baseline communication libraries, particularly on AMD hardware. With AMD MI300X GPUs, DDA surpasses the RCCL baseline by 10-50% for decode (small message sizes) and delivers a 10-30% speedup for prefill. These enhancements have led to an approximate 10% reduction in time-to-incremental-token (TTIT), directly improving the user experience during the crucial decoding phase.

Low-precision Collectives

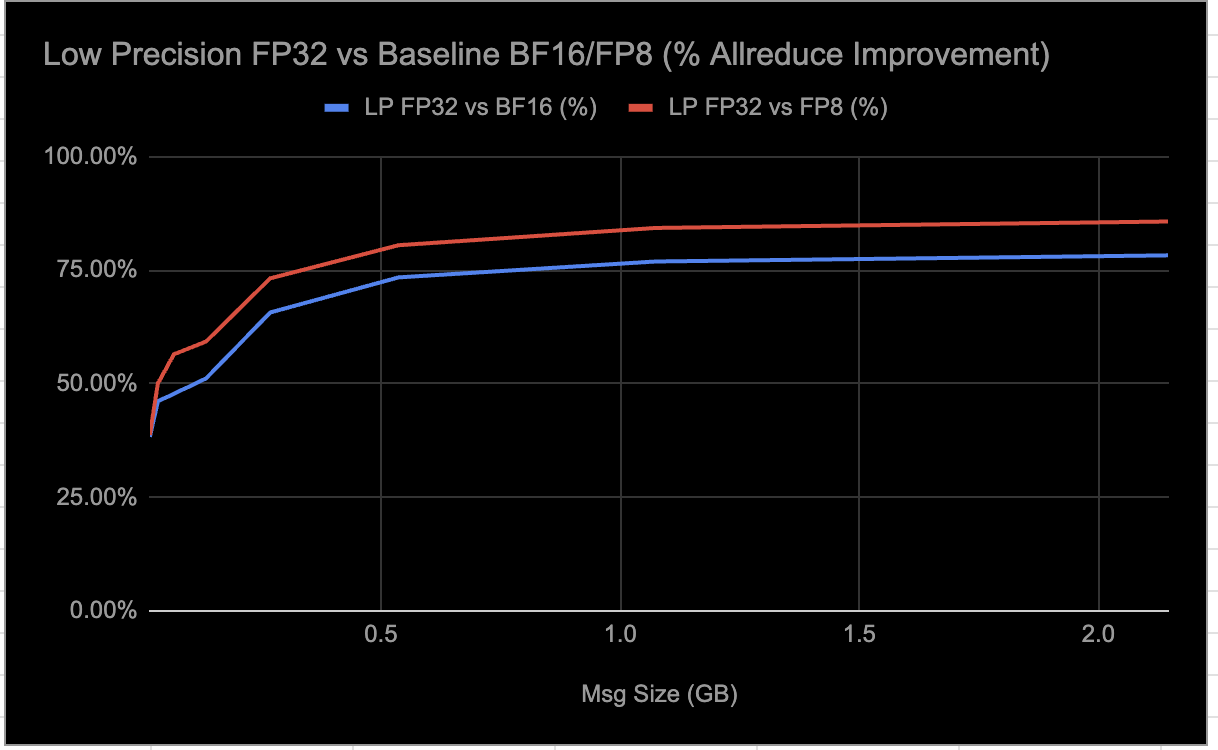

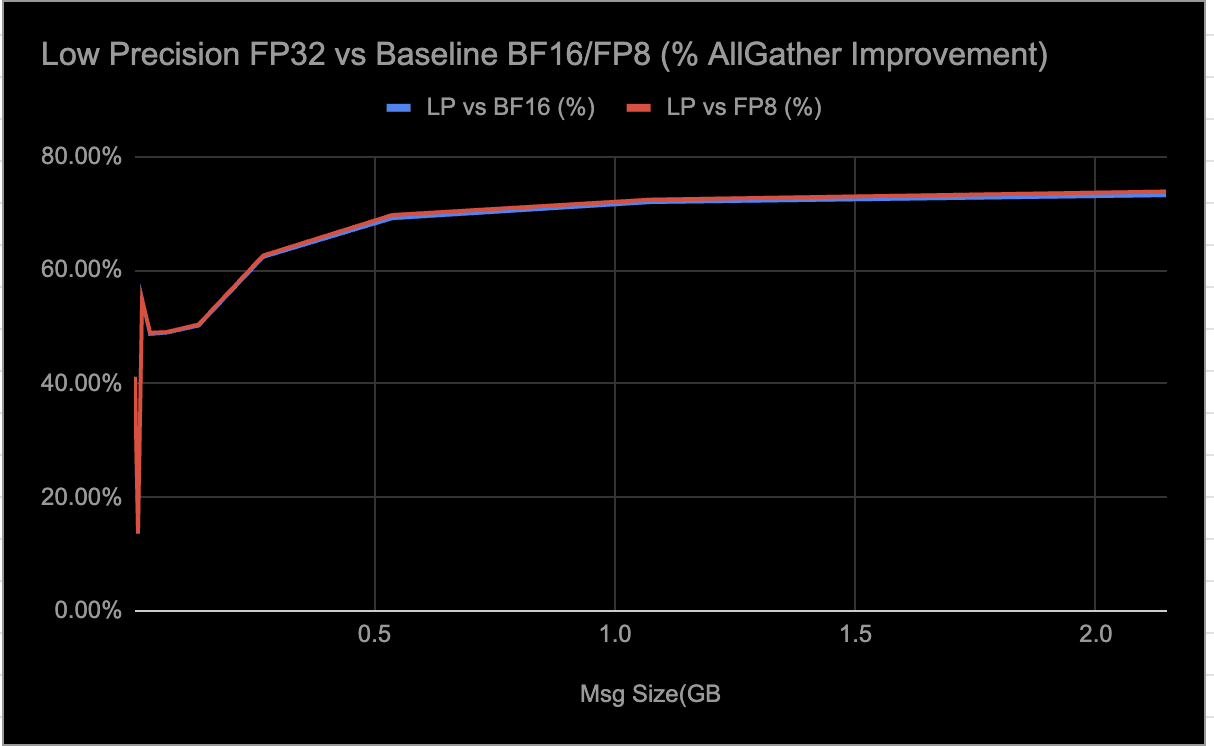

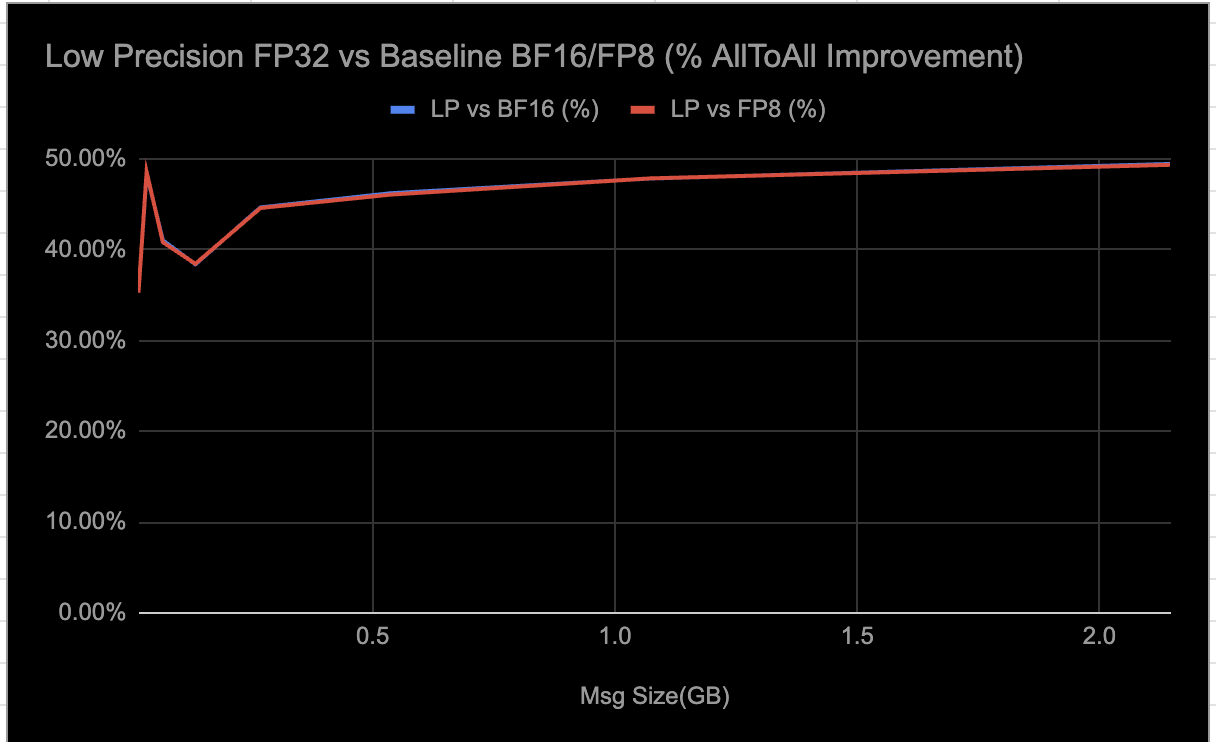

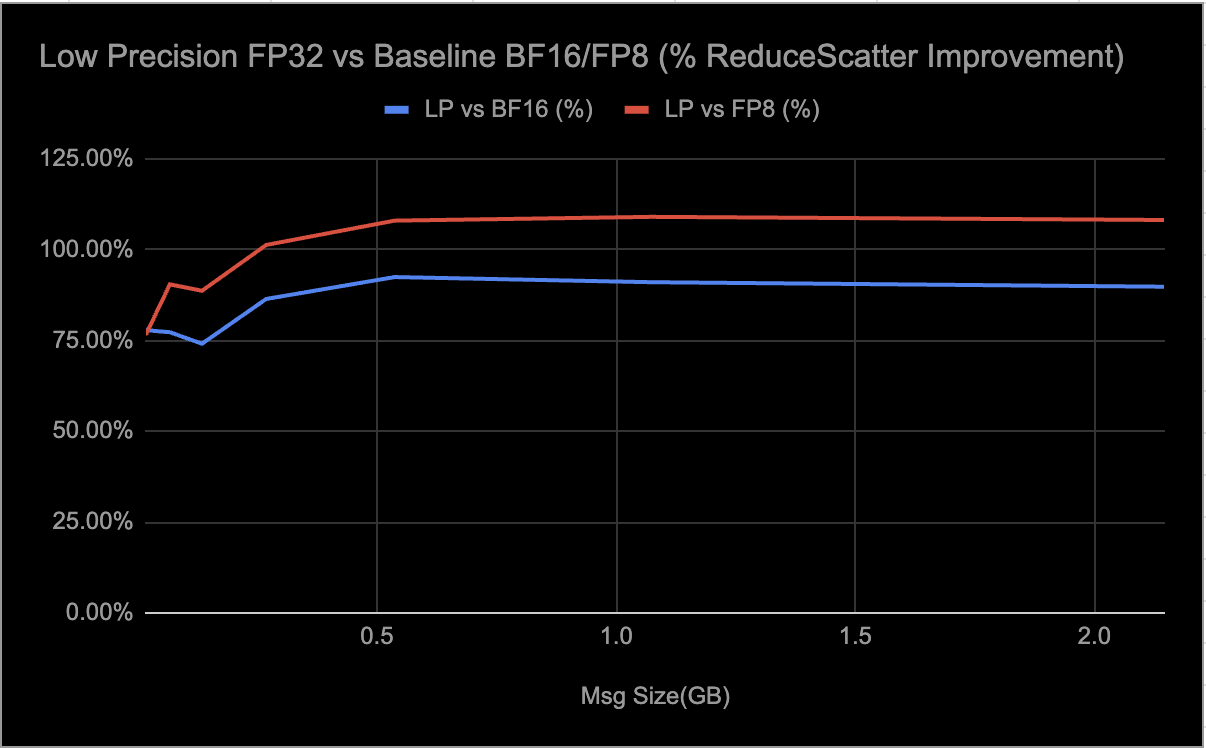

Low-precision (LP) collectives are a suite of distributed communication algorithms optimized for AMD Instinct MI300/MI350 GPUs to accelerate AI training and inference workloads. The suite includes AllReduce, AllGather, AllToAll, and ReduceScatter. These collectives accept FP32 and BF16 data and use FP8 quantization to compress it by up to 4:1 (FP32 to FP8; BF16 to FP8 gives 2:1). This significantly reduces communication overhead and improves scalability and resource utilization for large message sizes (≥16MB).
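The compression ratios follow directly from element sizes. The back-of-the-envelope sketch below ignores any scale factors an FP8 scheme may transmit alongside the payload, since the source does not describe RCCLX's wire format. `wire_bytes` is a hypothetical helper.

```python
# Bytes per element for each format involved.
BYTES_PER_ELEM = {"fp32": 4, "bf16": 2, "fp8": 1}


def wire_bytes(message_bytes: int, src: str, wire: str = "fp8") -> int:
    """Payload bytes when a message of `src` elements is sent as `wire` elements."""
    num_elems = message_bytes // BYTES_PER_ELEM[src]
    return num_elems * BYTES_PER_ELEM[wire]


msg = 16 * 1024 * 1024  # 16MB (taken as MiB), the low end of the targeted size range
for dtype in ("fp32", "bf16"):
    sent = wire_bytes(msg, dtype)
    print(f"{dtype}: {msg} B -> {sent} B ({msg // sent}:1)")
# fp32: 16777216 B -> 4194304 B (4:1)
# bf16: 16777216 B -> 8388608 B (2:1)
```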

The algorithms use parallel peer-to-peer (P2P) mesh communication to fully exploit AMD's Infinity Fabric for high bandwidth and low latency. Crucially, compute steps run in high precision (FP32) to ensure numerical stability. Precision loss depends mainly on two factors. The first is the number of quantization operations, typically one or two per data type in each collective. The second is how well the data can be represented within the FP8 range.
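The NumPy simulation below illustrates the precision argument: FP8 is used only for transfers, and the reduction runs in FP32. Several details are assumptions not stated in the source:

- the E4M3 variant of FP8 (largest finite value 448);
- per-tensor scaling;
- a reduce-then-gather structure with exactly two quantization points.

All function names are hypothetical.

```python
import numpy as np

E4M3_MAX = 448.0  # largest finite value in the FP8 E4M3 format assumed here


def fp8_e4m3_round(x):
    """Round to the nearest FP8 E4M3 value (saturating), returned as float32."""
    x = np.clip(np.asarray(x, dtype=np.float32), -E4M3_MAX, E4M3_MAX)
    # Binade exponent of each value; clamping at -6 (the smallest normal
    # exponent) puts tiny values on the evenly spaced subnormal grid.
    exp = np.floor(np.log2(np.maximum(np.abs(x), 2.0 ** -6)))
    step = 2.0 ** (exp - 3)  # 3 mantissa bits -> 8 representable steps per binade
    q = np.round(x / step) * step  # np.round rounds halves to even
    return np.clip(q, -E4M3_MAX, E4M3_MAX).astype(np.float32)


def quantize(x):
    """Per-tensor scaling (assumed): map max |x| onto E4M3_MAX, then round to FP8."""
    scale = max(float(np.max(np.abs(x))), 1e-30) / E4M3_MAX
    return fp8_e4m3_round(np.asarray(x, dtype=np.float32) / scale), scale


def dequantize(q, scale):
    return (q * scale).astype(np.float32)


def lp_allreduce_sim(inputs):
    """Simulated low-precision allreduce: FP8 on the wire, FP32 arithmetic."""
    # Quantization 1: each rank's contribution travels as FP8.
    received = [dequantize(*quantize(x)) for x in inputs]
    # The reduction itself runs in FP32.
    acc = np.zeros(np.shape(inputs[0]), dtype=np.float32)
    for contrib in received:
        acc += contrib
    # Quantization 2: the reduced result travels back as FP8 (all-gather phase).
    return dequantize(*quantize(acc))
```

To see how the two quantization steps interact with the data's dynamic range, compare `lp_allreduce_sim` against a plain FP32 sum on representative tensors.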

Because LP collectives can be enabled dynamically, users can activate them selectively in the end-to-end scenarios where the performance gains matter most. Internal experiments showed significant speedups for FP32 and notable improvements for BF16. Note that these collectives are currently tuned for single-node deployments. The impact of reduced precision on numerical accuracy was evaluated and found acceptable for the evaluated workloads, so this approach maximizes throughput while keeping accuracy within acceptable bounds. LP collectives are fully integrated and available in RCCLX for AMD platforms. Enable them by setting the environment variable RCCL_LOW_PRECISION_ENABLE=1.
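Because the switch is an environment variable, one way to enable LP collectives selectively is per job, at launch time. The sketch below rests on two assumptions. First, the variable is read when the library initializes, so it must be set before the worker processes start. Second, leaving it unset keeps the default full-precision path. `lp_env`, `launch`, and any script name passed to it are hypothetical.

```python
import os
import subprocess
from typing import Dict


def lp_env(enable: bool) -> Dict[str, str]:
    """Copy of the current environment with RCCLX LP collectives switched on or off."""
    env = dict(os.environ)
    if enable:
        env["RCCL_LOW_PRECISION_ENABLE"] = "1"
    else:
        env.pop("RCCL_LOW_PRECISION_ENABLE", None)  # assumed default: LP disabled
    return env


def launch(script: str, nproc: int, enable_lp: bool) -> subprocess.CompletedProcess:
    """Start one torchrun job with LP collectives toggled for that job only."""
    cmd = ["torchrun", f"--nproc_per_node={nproc}", script]
    return subprocess.run(cmd, env=lp_env(enable_lp), check=True)
```

For example, `launch("serve.py", 8, enable_lp=True)` would run a hypothetical `serve.py` with LP collectives on. Other jobs on the same machine keep the default behavior.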

MI300 – Float LP AllReduce speedup.

MI300 – Float LP AllGather speedup.

MI300 – Float LP AllToAll speedup.

MI300 – Float LP ReduceScatter speedup.

When selectively enabling LP collectives, the following results have been observed from end-to-end inference workload evaluations:

- ~0.3% delta on GSM8K evaluation runs.

- ~9–10% decrease in latency.

- ~7% increase in throughput.

Throughput measurements, as depicted in the graphs, were obtained using param-bench rccl-tests. For the MI300, tests were conducted on RCCLX built with ROCm 6.4, and for the MI350, on RCCLX built with ROCm 7.0. Each test comprised 10 warmup iterations followed by 100 measurement iterations. The reported results represent the average throughput across these measurement iterations.
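The measurement procedure can be sketched generically: 10 untimed warmup iterations, then 100 timed iterations whose throughput is averaged. This is not param-bench's code, and the function name is hypothetical. The sketch averages per-iteration throughput, which is one reading of "average throughput across these measurement iterations"; some tools instead compute throughput from the average time.

```python
import time
from typing import Callable


def mean_throughput_gbps(op: Callable[[], None], nbytes: int,
                         warmup: int = 10, iters: int = 100) -> float:
    """Run `op` `warmup` times untimed, then `iters` timed runs; return mean GB/s.

    `op` must block until the collective finishes (for GPU work, synchronize
    inside it); otherwise only the launch overhead is timed.
    """
    for _ in range(warmup):
        op()  # untimed: lets caches, allocators, and connections settle
    rates = []
    for _ in range(iters):
        start = time.perf_counter()
        op()
        elapsed = time.perf_counter() - start
        rates.append(nbytes / elapsed / 1e9)
    return sum(rates) / len(rates)
```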

Easy adaptation of AI models

RCCLX integrates with the Torchcomms API as a custom backend. The objective is for this backend to achieve feature parity with the NCCLX backend, which is designed for NVIDIA platforms. Torchcomms provides users with a unified API for communication across diverse platforms. This means users can port their applications across AMD or other platforms without altering familiar APIs, even when utilizing the novel features offered by CTran.
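Because Torchcomms exposes the same API for every backend, a portable program might only need to choose the backend name at startup. The helper below is a hypothetical sketch. It keys off `torch.version.hip`, which PyTorch sets to a version string on ROCm builds and to `None` otherwise. It also assumes the NCCLX backend is registered under the name `"ncclx"`.

```python
from typing import Optional


def backend_for(hip_version: Optional[str]) -> str:
    """Pick a Torchcomms backend name for the current PyTorch build.

    Pass torch.version.hip: a version string on ROCm builds, None elsewhere.
    "ncclx" as the NVIDIA backend name is an assumption.
    """
    return "rcclx" if hip_version else "ncclx"
```

A script could then call `torchcomms.new_comm(backend_for(torch.version.hip), device, name="my_comm")`, leaving the device choice as the only other platform-specific detail.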

RCCLX Quick Start Guide

To install Torchcomms with the RCCLX backend, refer to the installation instructions provided in the Torchcomms repository.

```python
import torch
import torchcomms

# Eagerly initialize a communicator using the MASTER_PORT/MASTER_ADDR/RANK/WORLD_SIZE
# environment variables provided by torchrun.
# This communicator is bound to a single device.
comm = torchcomms.new_comm("rcclx", torch.device("hip"), name="my_comm")

print(f"I am rank {comm.get_rank()} of {comm.get_size()}!")

# Fill a 10x20 tensor with this rank's index (torch.full takes the value as fill_value).
t = torch.full((10, 20), fill_value=comm.get_rank(), dtype=torch.float)

# Run an all_reduce on the current stream.
comm.allreduce(t, torchcomms.ReduceOp.SUM, async_op=False)
```
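Each rank fills its tensor with its own rank index. A SUM allreduce over N ranks should therefore leave every element equal to 0 + 1 + ... + (N - 1) = N(N - 1)/2 on all ranks. The hypothetical helper below computes that expected value for a quick sanity check.

```python
def expected_allreduce_value(world_size: int) -> float:
    """Expected element value after SUM-allreducing tensors filled with each rank's index."""
    return world_size * (world_size - 1) / 2
```

For example, after the allreduce in the quick-start script, `torch.testing.assert_close(t, torch.full_like(t, expected_allreduce_value(comm.get_size())))` should pass on every rank.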