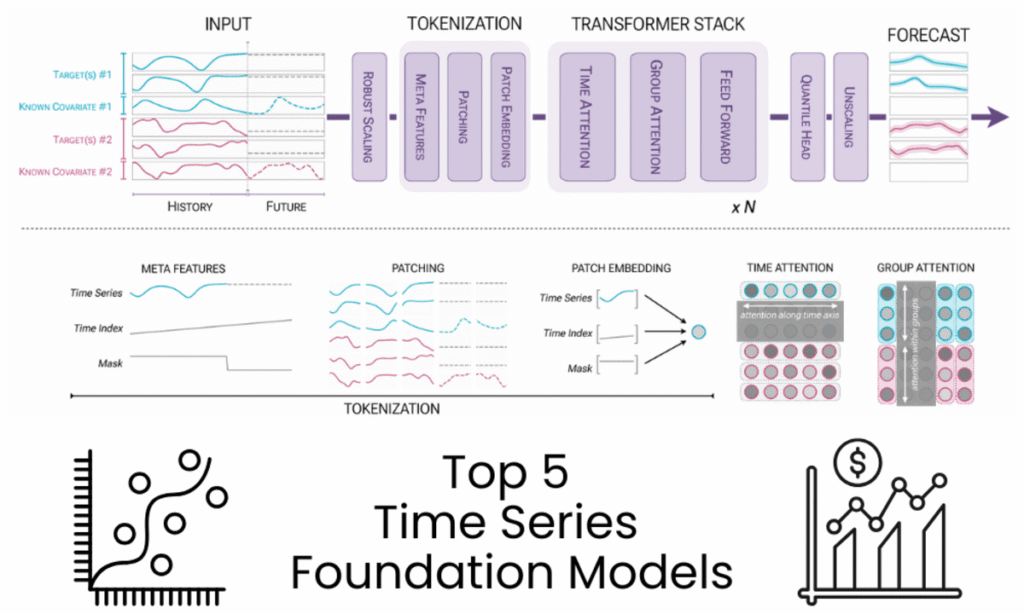

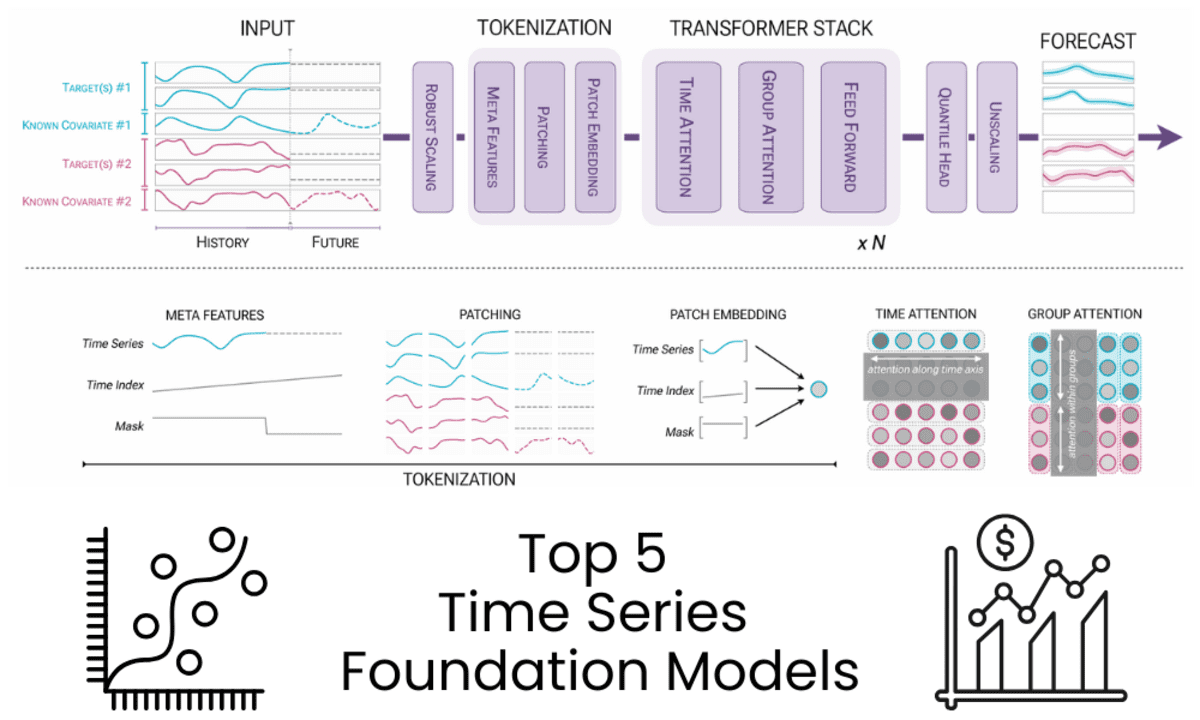

Image by Author | Diagram from Chronos-2: From Univariate to Universal Forecasting

Introduction

Foundation models existed well before the rise of large language models like ChatGPT. Pretrained models had already significantly advanced fields such as computer vision and natural language processing, enabling tasks like image segmentation, classification, and text comprehension.

This paradigm is now transforming time series forecasting. Rather than developing and fine-tuning an individual model for every dataset, time series foundation models are pretrained once on extensive and varied temporal data. Given only the historical context of a series at inference time, they can deliver robust zero-shot forecasts across domains, frequencies, and horizons, frequently rivaling deep learning models that require extensive training on each dataset.

For those still primarily using classical statistical methods or deep learning models tailored to single datasets, this represents a significant evolution in forecasting system development.

This article examines five time series foundation models, chosen for their performance, popularity (based on Hugging Face downloads), and practical applicability.

1. Chronos-2

Chronos-2 is an encoder-only time series foundation model with 120 million parameters, designed for zero-shot forecasting. It offers support for univariate, multivariate, and covariate-informed forecasting within a unified architecture, providing accurate multi-step probabilistic forecasts without requiring task-specific training.

Key Features:

- Encoder-only architecture inspired by T5

- Zero-shot forecasting with quantile outputs

- Native support for past and known future covariates

- Long context length up to 8,192 and forecast horizon up to 1,024

- Efficient CPU and GPU inference with high throughput
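
The context and horizon limits listed above have a practical consequence: a history longer than the context window has to be trimmed before inference, and a request beyond the maximum horizon has to be rejected or split. A minimal sketch in plain Python, assuming the limits quoted above (8,192 and 1,024); the function names are hypothetical and are not part of the Chronos API:

```python
from typing import List, Sequence

MAX_CONTEXT = 8192  # context limit quoted in the feature list above
MAX_HORIZON = 1024  # forecast horizon limit quoted in the feature list above


def trim_context(history: Sequence[float], max_context: int = MAX_CONTEXT) -> List[float]:
    """Keep only the most recent `max_context` observations (hypothetical helper)."""
    if max_context <= 0:
        raise ValueError("max_context must be positive")
    return list(history[-max_context:])


def validate_horizon(horizon: int, max_horizon: int = MAX_HORIZON) -> int:
    """Reject horizons outside the stated supported range (hypothetical helper)."""
    if not 1 <= horizon <= max_horizon:
        raise ValueError(f"horizon must be between 1 and {max_horizon}, got {horizon}")
    return horizon
```

Keeping the most recent observations is the usual choice, since the end of the series is what the forecast continues from.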

Use Cases:

- Large-scale forecasting across many related time series

- Covariate-driven forecasting, including demand, energy, and pricing

- Rapid prototyping and production deployment without model training

Best Use Cases:

- Production forecasting systems

- Research and benchmarking

- Complex multivariate forecasting with covariates

2. TiRex

TiRex is a pretrained time series forecasting model with 35 million parameters, built on xLSTM. It is designed for zero-shot forecasting across both long and short horizons, delivering accurate predictions without task-specific training and offering both point and probabilistic forecasts.

Key Features:

- Pretrained xLSTM-based architecture

- Zero-shot forecasting without dataset-specific training

- Point forecasts and quantile-based uncertainty estimates

- Strong performance on both long and short horizon benchmarks

- Optional CUDA acceleration for high-performance GPU inference
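
Quantile-based outputs like these are commonly scored with the pinball (quantile) loss, with the median quantile doubling as the point forecast. A minimal sketch in plain Python; the quantile levels and forecast numbers are made up for illustration and do not come from TiRex:

```python
from typing import Dict, List


def pinball_loss(actual: float, predicted: float, q: float) -> float:
    """Pinball loss for one observation at quantile level q (0 < q < 1)."""
    diff = actual - predicted
    return q * diff if diff >= 0 else (q - 1.0) * diff


def mean_pinball_loss(actuals: List[float], quantile_forecasts: Dict[float, List[float]]) -> float:
    """Average pinball loss over all quantile levels and time steps."""
    total, count = 0.0, 0
    for q, preds in quantile_forecasts.items():
        for y, y_hat in zip(actuals, preds):
            total += pinball_loss(y, y_hat, q)
            count += 1
    return total / count


# Illustrative numbers only: a 3-step forecast at three quantile levels.
forecast = {
    0.1: [8.0, 9.0, 9.5],
    0.5: [10.0, 11.0, 12.0],  # the median serves as the point forecast
    0.9: [12.0, 13.5, 15.0],
}
actuals = [10.0, 12.0, 11.0]
score = mean_pinball_loss(actuals, forecast)
```

Lower scores are better. The loss penalizes under-prediction more heavily at high quantile levels and over-prediction more heavily at low ones, which is what makes it suitable for judging uncertainty estimates.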

Use Cases:

- Zero-shot forecasting for new or unseen time series datasets

- Long- and short-term forecasting in finance, energy, and operations

- Fast benchmarking and deployment without model training

3. TimesFM

TimesFM, developed by Google Research, is a pretrained time series foundation model for zero-shot forecasting. Its open checkpoint, timesfm-2.0-500m, is a decoder-only model optimized for univariate forecasting. It accommodates long historical contexts and flexible forecast horizons without task-specific training.

Key Features:

- Decoder-only foundation model with a 500M-parameter checkpoint

- Zero-shot univariate time series forecasting

- Context length up to 2,048 time points, with support beyond training limits

- Flexible forecast horizons with optional frequency indicators

- Optimized for fast point forecasting at scale
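
The optional frequency indicator mentioned above is a small integer category rather than a calendar frequency. The sketch below assumes the three categories described in the TimesFM README (0 for high-frequency data up to daily, 1 for weekly and monthly, 2 for quarterly and yearly); verify them against the version you install. The helper `frequency_indicator` and its alias table are hypothetical, written for this example, and are not part of the timesfm package:

```python
# Assumed mapping from pandas-style frequency aliases to TimesFM frequency
# categories (0 = high, 1 = medium, 2 = low). Check the TimesFM README
# before relying on it.
FREQ_CATEGORY = {
    "min": 0, "T": 0, "h": 0, "H": 0, "D": 0, "B": 0,  # up to daily
    "W": 1, "M": 1, "MS": 1, "ME": 1,                   # weekly, monthly
    "Q": 2, "QS": 2, "QE": 2, "Y": 2, "YE": 2, "A": 2,  # quarterly, yearly
}


def frequency_indicator(freq: str) -> int:
    """Map a frequency alias such as 'W-SUN' to an assumed category (default 0)."""
    base = freq.split("-")[0]  # drop anchors: "W-SUN" -> "W"
    return FREQ_CATEGORY.get(base, 0)
```

Unknown aliases fall back to 0 here, mirroring the indicator being optional; a stricter pipeline might raise an error instead.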

Use Cases:

- Large-scale univariate forecasting across diverse datasets

- Long-horizon forecasting for operational and infrastructure data

- Rapid experimentation and benchmarking without model training

4. IBM Granite TTM R2

Granite-TimeSeries-TTM-R2 is a collection of compact, pretrained time series foundation models from IBM Research, part of the TinyTimeMixers (TTM) framework. These models are built for multivariate forecasting and deliver robust zero-shot and few-shot performance with parameter counts as low as 1 million, making them well suited to research and resource-constrained environments.

Key Features:

- Tiny pretrained models starting from 1M parameters

- Strong zero-shot and few-shot multivariate forecasting performance

- Focused models tailored to specific context and forecast lengths

- Fast inference and fine-tuning on a single GPU or CPU

- Support for exogenous variables and static categorical features
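
Because each model in the family is tied to a specific context and forecast length, using TTM starts with choosing a variant that fits the data. A minimal sketch of that selection step in plain Python; the (context, forecast) pairs below are illustrative assumptions rather than an authoritative list of released checkpoints, and `pick_variant` is a hypothetical helper:

```python
from typing import List, Optional, Tuple

# Illustrative (context_length, forecast_length) pairs. Treat these as
# assumptions for the sketch and consult the model card for the real list.
VARIANTS: List[Tuple[int, int]] = [(512, 96), (1024, 96), (1536, 96)]


def pick_variant(
    history_length: int,
    horizon: int,
    variants: List[Tuple[int, int]] = VARIANTS,
) -> Optional[Tuple[int, int]]:
    """Pick the variant with the longest context the history can fill.

    Only variants whose forecast length covers the requested horizon are
    considered; ties on context prefer the shorter forecast length.
    Returns None when nothing fits.
    """
    candidates = [(c, f) for c, f in variants if c <= history_length and f >= horizon]
    return max(candidates, key=lambda v: (v[0], -v[1]), default=None)
```

With these pairs, `pick_variant(2000, 24)` returns `(1536, 96)`, while a history of only 100 points returns `None`, signalling that the series is too short for any listed variant.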

Use Cases:

- Multivariate forecasting in low-resource or edge environments

- Zero-shot baselines with optional lightweight fine-tuning

- Fast deployment for operational forecasting with limited data

5. Toto Open Base 1

Toto-Open-Base-1.0 is a decoder-only time series foundation model specifically developed for multivariate forecasting in observability and monitoring scenarios. It excels with high-dimensional, sparse, and non-stationary data, achieving strong zero-shot performance on major benchmarks like GIFT-Eval and BOOM.

Key Features:

- Decoder-only transformer for flexible context and prediction lengths

- Zero-shot forecasting without fine-tuning

- Efficient handling of high-dimensional multivariate data

- Probabilistic forecasts using a Student-t mixture model

- Pretrained on over two trillion time series data points
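
To make the Student-t mixture output more concrete, the sketch below draws samples from a two-component mixture and reads off an 80% interval. It uses only the Python standard library; the component weights, locations, scales, and degrees of freedom are invented for illustration and are not values produced by Toto:

```python
import math
import random
from typing import List, Sequence, Tuple

# (weight, location, scale, degrees_of_freedom) for one mixture component
Component = Tuple[float, float, float, float]


def sample_student_t(rng: random.Random, loc: float, scale: float, df: float) -> float:
    """Draw one Student-t sample as normal / sqrt(chi-square / df)."""
    z = rng.gauss(0.0, 1.0)
    chi2 = rng.gammavariate(df / 2.0, 2.0)  # chi-square with df degrees of freedom
    return loc + scale * z / math.sqrt(chi2 / df)


def sample_mixture(rng: random.Random, components: Sequence[Component], n: int) -> List[float]:
    """Pick a component by weight, then sample from it, n times."""
    weights = [c[0] for c in components]
    draws = []
    for _ in range(n):
        _, loc, scale, df = rng.choices(components, weights=weights, k=1)[0]
        draws.append(sample_student_t(rng, loc, scale, df))
    return draws


def empirical_quantile(samples: Sequence[float], q: float) -> float:
    """Nearest-rank empirical quantile."""
    ordered = sorted(samples)
    index = min(len(ordered) - 1, max(0, math.ceil(q * len(ordered)) - 1))
    return ordered[index]


rng = random.Random(0)
# Made-up parameters: a main regime around 100 and a heavier-tailed one around 130.
components = [(0.7, 100.0, 5.0, 4.0), (0.3, 130.0, 10.0, 3.0)]
samples = sample_mixture(rng, components, 2000)
interval = (empirical_quantile(samples, 0.1), empirical_quantile(samples, 0.9))
```

A mixture of heavy-tailed components can represent both multiple regimes and occasional spikes, which fits the sparse, non-stationary metrics described above.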

Use Cases:

- Observability and monitoring metrics forecasting

- High-dimensional system and infrastructure telemetry

- Zero-shot forecasting for large-scale, non-stationary time series

Summary

The following summarizes the core characteristics of the time series foundation models discussed, highlighting their model size, architecture, and forecasting capabilities.

- Chronos-2: 120M parameters, encoder-only architecture, supports univariate, multivariate, and probabilistic forecasting. Key strengths include strong zero-shot accuracy, long context and horizon support, and high inference throughput.

- TiRex: 35M parameters, xLSTM-based architecture, supports univariate and probabilistic forecasting. Noted for its lightweight design and strong performance across both short and long horizons.

- TimesFM: 500M parameters, decoder-only architecture, provides univariate point forecasts. Excels at handling long contexts and flexible horizons at scale.

- Granite TimeSeries TTM-R2: Models start at 1M parameters, each pretrained for a specific context and forecast length, and offer multivariate point forecasts. Highly compact, with fast inference and strong zero-shot and few-shot results.

- Toto Open Base 1: 151M parameters, decoder-only architecture, supports multivariate and probabilistic forecasting. Optimized for high-dimensional, non-stationary observability data.
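
The summary above can also be encoded as a small lookup for shortlisting models by capability. A minimal sketch in plain Python; the field names and the `shortlist` helper are hypothetical, and the values simply restate the list above:

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ModelSummary:
    name: str
    params_millions: float  # for Granite, the smallest variant
    architecture: str
    multivariate: bool
    probabilistic: bool


MODELS = [
    ModelSummary("Chronos-2", 120, "encoder-only", True, True),
    ModelSummary("TiRex", 35, "xLSTM", False, True),
    ModelSummary("TimesFM", 500, "decoder-only", False, False),
    ModelSummary("Granite TimeSeries TTM-R2", 1, "focused pretrained models", True, False),
    ModelSummary("Toto Open Base 1", 151, "decoder-only", True, True),
]


def shortlist(multivariate: bool, probabilistic: bool) -> List[str]:
    """Return the models that cover every requested capability."""
    return [
        m.name
        for m in MODELS
        if (m.multivariate or not multivariate) and (m.probabilistic or not probabilistic)
    ]
```

For example, `shortlist(multivariate=True, probabilistic=True)` returns Chronos-2 and Toto Open Base 1, the two models the summary lists as supporting both.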